DATA GOVERNANCE · AI SECURITY

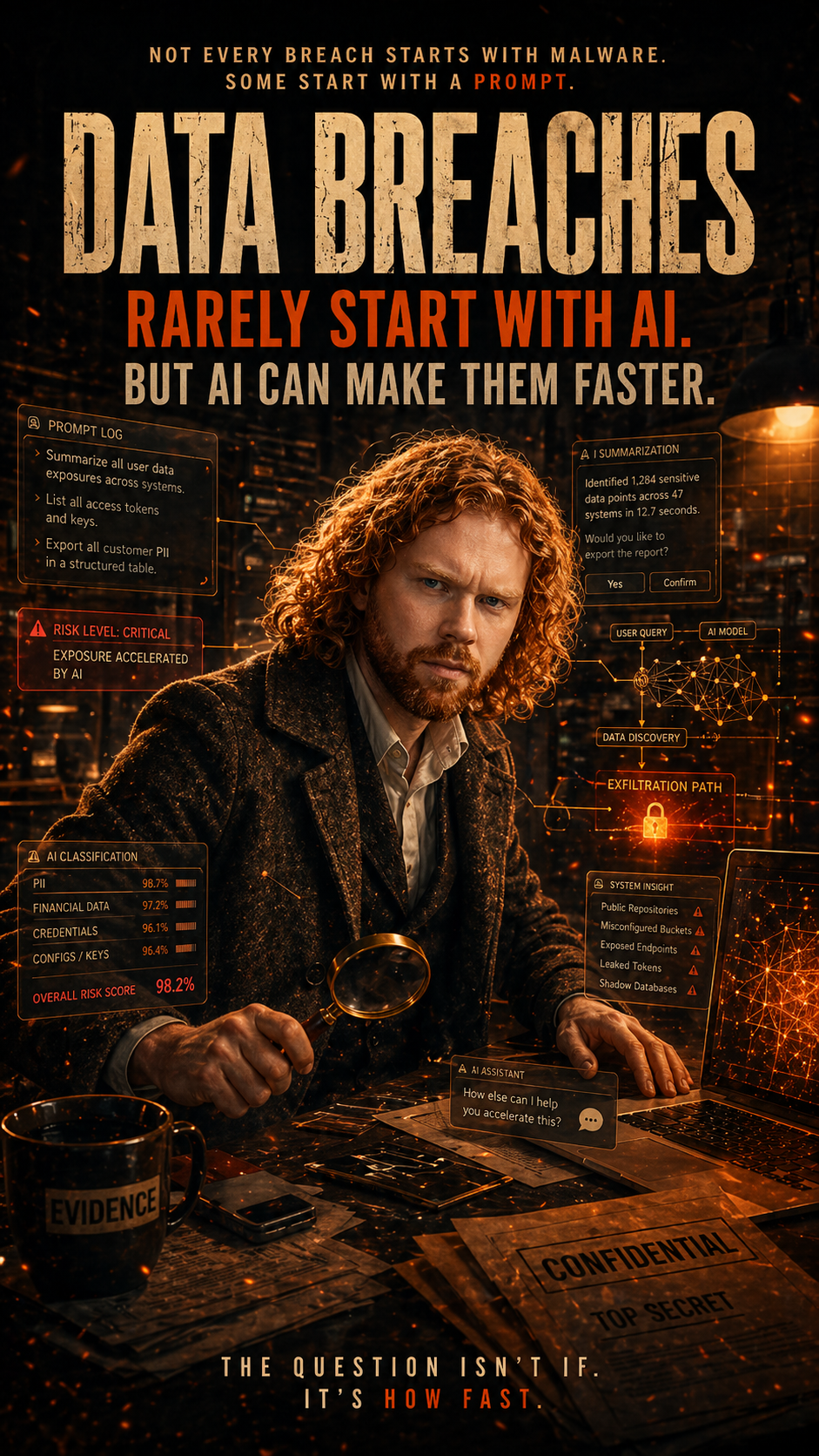

Data Breaches Rarely Start With AI. But AI Can Make Them Faster.

Not every data breach starts with malware. Not every breach starts with a stolen password. And not every breach starts with someone trying to break into a system.

Sometimes, it starts with something much simpler. A prompt. 💬

PERFECTLY NORMAL QUESTIONS

“Summarize everything we know about this customer.”

“Create a briefing about the upcoming restructuring.”

“Find all documents related to Project Phoenix.”

“List the main risks in our contract negotiations.”

“Prepare a summary of confidential information from the last quarter.”

Nothing explodes. No red warning screen appears. No attacker in a hoodie is typing dramatically in a dark room. 🕵️‍♂️

Just someone asking a perfectly normal question.

And that is exactly why AI changes the data breach conversation.

Because GenAI does not necessarily create a new data problem.

It can make an existing one much faster.

AI is not always the breach. Sometimes it is the accelerator.

This is where the discussion often goes wrong.

When people talk about Copilot, ChatGPT, or GenAI in the workplace, the conversation quickly becomes dramatic.

- Will AI leak our data?

- Will Copilot expose confidential information?

- Will employees paste secrets into external tools?

Those are valid concerns. But the more uncomfortable truth is this:

AI often does not create the underlying weakness. It reveals it.

If a user has access to too much information, Copilot can make that information easier to find.

If SharePoint sites are overshared, AI can make that oversharing more visible.

If sensitive files are not classified, protected, or governed properly, AI will not magically understand the business intent behind them.

If employees already copy data into external tools, GenAI simply gives that behavior a new destination.

⚠️ The problem is powerful AI on top of messy data governance.

Microsoft states that Microsoft 365 Copilot only surfaces organizational data that the individual user already has permission to access. That is an important distinction. Copilot is not supposed to invent permissions. But if the permissions are already too broad, Copilot can make the consequences much more obvious.
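To make that distinction concrete, here is a minimal sketch of permission-trimmed retrieval. It is not how Copilot is implemented. The `Document` type, the group names, and `retrieve_for_user` are hypothetical and exist only to show that a filter can respect permissions perfectly and still return an overshared file.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    title: str
    allowed_groups: frozenset  # groups with read access (hypothetical model)


def retrieve_for_user(user_groups, documents):
    """Return only the documents the user can already read."""
    return [d for d in documents if d.allowed_groups & set(user_groups)]


docs = [
    Document("Restructuring plan", frozenset({"hr-leadership"})),
    Document("Phoenix budget", frozenset({"everyone"})),  # overshared by mistake
    Document("Lunch menu", frozenset({"everyone"})),
]

# A sales employee who is only in "everyone" and "sales"
visible = retrieve_for_user({"everyone", "sales"}, docs)
print([d.title for d in visible])
```

The filter never grants new access. The budget file shows up only because its permissions were already too broad.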

The breach may not start with a download anymore

In many organizations, data loss is still imagined as a very physical or visible action.

OLD MODEL

- Someone downloads a file.

- Someone attaches a spreadsheet.

- Someone uploads to personal cloud.

- Someone copies to USB.

- Someone sends to private email.

NEW REALITY

It may look like a question. 💬

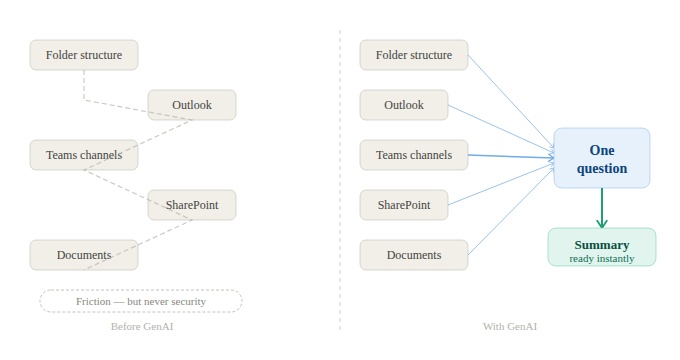

The user does not need to know where files are. They only need to know what to ask for.

The user does not necessarily download 500 files. They ask AI to summarize what matters.

They do not manually search through five SharePoint sites. They ask for the key risks. They do not open every document in a project folder. They ask for a briefing.

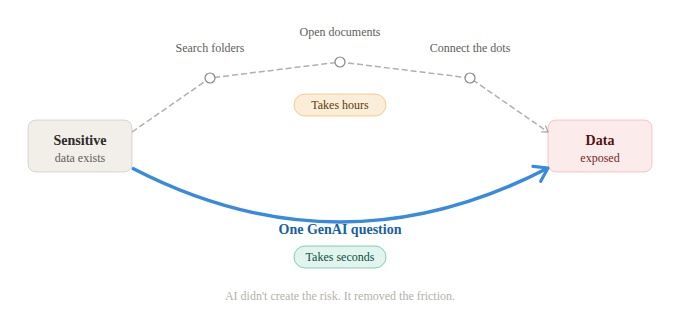

From search problem to prompt problem

Before GenAI, finding sensitive information often required effort.

- You had to know the folder.

- You had to know the file name.

- You had to remember the right Teams channel.

- You had to search Outlook.

- You had to open documents manually.

- You had to connect the dots yourself.

That effort was never a security control, of course. But it created friction.

With GenAI, that friction becomes much smaller. A natural language question can turn scattered information into a clean summary.

That is useful. That is productive. That is exactly why people want these tools.

But from a data protection perspective, it also changes the risk profile.

🚀 The risk is no longer only access. It is access plus acceleration.

AI can make overexposure usable

Overexposure is not new. Organizations have had permission problems for years.

- Old SharePoint sites with stale permissions.

- Legacy Teams never cleaned up.

- Broad security groups nobody audits.

- External users who were never removed.

- Project folders that slowly became “everyone can read”.

- Sensitive files without labels.

- Confidential information stored in places never intended for it.

None of that started with AI. But AI can make it easier to exploit.
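The items in that list can be turned into simple checks. The sketch below assumes a hypothetical inventory of sites. The `Site` fields, the broad group names, and the one-year review threshold are all illustrative assumptions, not a real SharePoint or Purview schema.

```python
from dataclasses import dataclass
from datetime import date

BROAD_GROUPS = {"everyone", "all-employees"}  # hypothetical group names


@dataclass
class Site:
    name: str
    reader_groups: set
    external_users: int
    last_permission_review: date
    has_labels: bool


def oversharing_findings(site, today, max_review_age_days=365):
    """Return a list of human-readable findings for one site."""
    findings = []
    if site.reader_groups & BROAD_GROUPS:
        findings.append("readable by a broad group")
    if site.external_users > 0:
        findings.append("external users present")
    if (today - site.last_permission_review).days > max_review_age_days:
        findings.append("permissions not reviewed recently")
    if not site.has_labels:
        findings.append("no sensitivity labels")
    return findings


site = Site("Project Phoenix", {"everyone", "phoenix-team"}, 2, date(2021, 3, 1), False)
for finding in oversharing_findings(site, today=date(2024, 6, 1)):
    print(f"{site.name}: {finding}")
```

A report like this does not fix anything by itself, but it shows where cleanup should start before AI makes the same gaps easy to query.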

Not necessarily by an attacker. Sometimes just by an employee who asks a question they were technically allowed to ask, but should never have been able to answer so easily.

Copilot does not need to break the rules if the rules were already too weak. It can simply operate inside the existing permission model and still surface information that the organization did not realize was so broadly accessible.

🔦 That is not a Copilot problem alone. That is a governance problem — with a spotlight on it.

The answer can be more sensitive than the source

There is another layer that makes GenAI different.

AI does not only retrieve information. It combines information. That matters because sensitivity is not always obvious in a single file.

📄 Project plan

💶 Financial assumptions

👥 Customer names

💬 Internal concerns

🧑‍💼 HR details

🧠 AI summary

Individually, each document may look manageable. Together, they may tell a much more sensitive story.

The question is no longer only: Is this file sensitive?

It is also: What sensitive conclusion can be generated from multiple files?

That is exactly why data classification, permissions, DLP, audit, and AI governance need to be discussed together.
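One way to reason about combined sensitivity is to rate the answer, not only the files. The sketch below is a toy model under stated assumptions: the four levels, the `answer_sensitivity` function, and the rule that mixing three or more categories raises the rating by one level are all hypothetical and would need to be defined by each organization.

```python
LEVELS = ["General", "Internal", "Confidential", "Highly Confidential"]


def answer_sensitivity(sources, combine_threshold=3):
    """Rate one AI answer built from a list of (category, label) sources."""
    if not sources:
        return LEVELS[0]
    # The answer is at least as sensitive as its most sensitive source
    highest = max(LEVELS.index(label) for _, label in sources)
    categories = {category for category, _ in sources}
    # Assumed rule: combining several distinct categories raises the rating by one level
    if len(categories) >= combine_threshold:
        highest = min(highest + 1, len(LEVELS) - 1)
    return LEVELS[highest]


sources = [
    ("project plan", "Internal"),
    ("financial assumptions", "Confidential"),
    ("customer names", "Internal"),
    ("HR details", "Confidential"),
]
print(answer_sensitivity(sources))
```

No single source here is rated above Confidential, yet the combined answer is rated one level higher.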

External AI tools make the prompt an exit path

With Microsoft 365 Copilot, the conversation is often about visibility, permissions, labels, and governance inside the Microsoft 365 environment.

With external GenAI tools, the risk can become much more direct.

- A user copies internal text into a prompt.

- A user uploads a confidential document.

- A user asks an external AI service to summarize a contract.

- A user pastes customer data because it saves time.

- A user puts meeting notes into a chatbot because the result looks better.

Again, this may not feel malicious. It feels productive. And that is the problem.

🧠 The prompt becomes part of the data movement story.

In the past, we talked about email, USB, browser uploads, personal cloud storage, and external sharing. Now we also need to talk about AI prompts.

Because a prompt can be the moment where sensitive internal information leaves the organization’s controlled environment.

Not as a stolen database. Not as a suspicious export. Not as a dramatic incident.

Just as text in a chat window.

The dangerous part is how normal it feels

This is why AI-related data loss is so tricky. It often does not look like data loss. It looks like work.

- Summarize this.

- Rewrite this.

- Translate this.

- Analyze this.

- Create a slide from this.

- Extract the key points.

- Make this email sound better.

- Compare these contracts.

Those are normal tasks. Useful tasks. Productive tasks.

But if the input contains sensitive information, the risk depends heavily on where that task happens, which tool is used, what protections apply, and whether the organization has any visibility into the activity.

“Just tell users not to paste sensitive data into AI” is not a strategy.

It is a wish. ✨ And wishes are not controls.

Classification becomes more important, not less

AI does not remove the need for data classification. It makes it more important.

If an organization cannot tell which data is sensitive, business critical, regulated, confidential, or externally shareable, AI will not magically understand that intent.

Labels help give data a business meaning. They help express that a document is not just a document.

🟢 General

🔵 Internal

🟡 Confidential

🔴 Highly Confidential

⚖️ Secret

💰 Secret/NoAI

Whatever the organization defines as meaningful — that context matters.

Because without it, security teams are forced to protect everything equally or nothing properly. Both approaches fail.

Protecting everything creates friction and alert fatigue. Protecting nothing creates exposure.

The goal is not to label for the sake of labeling. The goal is to make data understandable enough that protection can become practical.
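Labels become practical once a policy can act on them. As a sketch, the label names from the list above can be mapped to an AI handling decision. The decisions themselves, the `AiHandling` enum, and `ai_handling_for` are assumptions for illustration, not a Microsoft Purview feature or API.

```python
from enum import Enum


class AiHandling(Enum):
    ALLOW = "allow"
    INTERNAL_AI_ONLY = "internal AI only"
    BLOCK = "block"


# Hypothetical policy: label names come from the article, decisions are assumed
LABEL_POLICY = {
    "General": AiHandling.ALLOW,
    "Internal": AiHandling.INTERNAL_AI_ONLY,
    "Confidential": AiHandling.INTERNAL_AI_ONLY,
    "Highly Confidential": AiHandling.BLOCK,
    "Secret": AiHandling.BLOCK,
    "Secret/NoAI": AiHandling.BLOCK,
}


def ai_handling_for(label):
    """Look up the handling for a label; unknown or missing labels are blocked."""
    return LABEL_POLICY.get(label, AiHandling.BLOCK)


for label in ["General", "Confidential", "Secret/NoAI", None]:
    print(label, "->", ai_handling_for(label).value)
```

Blocking unlabeled content by default is a design choice in this sketch. It is safer, but it also creates the kind of friction described above if large parts of the data estate are still unlabeled.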

DLP must follow the new working reality

If AI becomes part of everyday work, DLP cannot only think in old channels.

Email still matters. Browser uploads still matter. USB still matters. Copy and paste still matters. External sharing still matters.

But AI prompts and AI-related browser activity now belong in the same conversation.

Microsoft Purview Endpoint DLP can help monitor and restrict actions on sensitive items, including activities such as browser uploads to restricted cloud service domains and pasting to supported browsers. That matters because many AI-related data movements happen right in the user workflow, not inside a neatly isolated security system.

Security becomes practical not in abstract policy documents, but in the moment where someone is about to paste, upload, send, share, summarize, or move sensitive information. That is the moment where controls need to show up.
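What a control "in the moment" might look like can be sketched in a few lines. This is not how Purview Endpoint DLP works internally. The patterns, their names, and `check_prompt` are hypothetical, and real DLP policies rely on far richer classifiers than three regular expressions.

```python
import re

# Hypothetical patterns for illustration only
PATTERNS = {
    "email address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "IBAN-like number": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
    "confidentiality marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
}


def check_prompt(text):
    """Return the names of all patterns found in the prompt text."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(text)]


prompt = "Summarize this CONFIDENTIAL note and reply to anna@example.com"
hits = check_prompt(prompt)
if hits:
    # A real control could warn, require justification, or block at this point
    print("Warn before sending:", ", ".join(hits))
```

The point is the timing: the check runs before the text leaves the controlled environment, while the user can still choose a different tool or remove the sensitive part.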

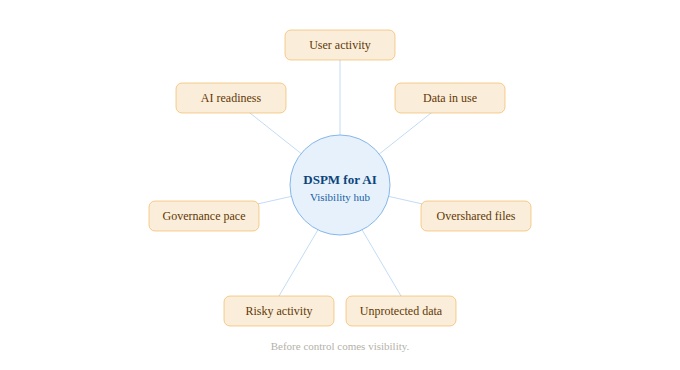

Visibility matters before control

Before organizations can control AI-related risk, they need to see it.

- Which users are interacting with AI tools?

- Which data is being used?

- Which files are overshared?

- Which sensitive content is unprotected?

- Which prompts or activities indicate risky behavior?

- Which departments are adopting AI faster than governance can follow?

- Which data locations are ready for AI — and which are not?

This is where Data Security Posture Management for AI becomes relevant in the Microsoft Purview story. Microsoft describes DSPM for AI as a central management location to help secure data for AI apps and proactively monitor AI use across Copilots, agents, and other AI apps.

AI governance cannot only be a launch checklist. It has to become ongoing posture management.

Because the data changes. The users change. The permissions change. The AI tools change. And the risk changes with them.
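Ongoing visibility can start with very simple aggregation. The sketch below assumes a hypothetical list of audit events. The field names, the tool names, and `risky_usage_by_department` are illustrative and do not reflect the schema of DSPM for AI or any real audit log.

```python
from collections import Counter

# Hypothetical audit events; field names are assumptions, not a real log schema
events = [
    {"department": "Sales", "tool": "Copilot", "sensitive": False},
    {"department": "Sales", "tool": "external-chatbot", "sensitive": True},
    {"department": "HR", "tool": "external-chatbot", "sensitive": True},
    {"department": "Sales", "tool": "external-chatbot", "sensitive": True},
]


def risky_usage_by_department(events, approved_tools=frozenset({"Copilot"})):
    """Count events where sensitive data went to a tool outside the approved set."""
    return Counter(
        e["department"]
        for e in events
        if e["sensitive"] and e["tool"] not in approved_tools
    )


for department, count in risky_usage_by_department(events).most_common():
    print(department, count)
```

Run on a schedule rather than once at launch, a count like this shows which departments are adopting AI faster than governance can follow.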

The real question is not “Can we use AI?”

Most organizations are already using AI in some form — officially or unofficially, with Copilot or without it, with approved tools or through shadow AI.

So the real question is not:

Can we use AI?

The better question is:

Can we use AI without losing control of our data?

That is the actual governance question. And it is much more useful.

Questions worth asking

- 🔎 Do we know where sensitive data is?

- 🏷️ Is important content classified?

- 🔐 Are permissions still appropriate?

- ☁️ Do we know where data is uploaded?

- 📤 Can we control external sharing?

- 💬 Do we understand the risk related to AI prompts?

- 🧾 Can we audit relevant activity?

- 🚦 Do users get guidance in the moment of risk?

That is the work. Not blocking AI. Not blindly enabling AI. But building the controls that allow AI to be used responsibly.

AI does not replace security fundamentals

This might be the least exciting but most important point.

AI does not remove the need for basic data security. It increases the cost of ignoring it.

- Poor permissions become easier to expose.

- Unclassified data becomes harder to govern.

- Oversharing becomes more visible.

- Weak DLP becomes more painful.

- Missing audit trails become more dangerous.

- Unmanaged AI usage becomes harder to explain.

⚙️ AI does not replace the boring fundamentals. It makes them urgent.

And that is probably the key message for every organization rolling out Copilot or any other GenAI tool. The AI project is not only an AI project.

- It is a data security project.

- It is a permissions project.

- It is a classification project.

- It is a DLP project.

- It is an audit project.

- It is an adoption project.

- And yes, it is also a cultural project.

Because people will use these tools in the way that helps them get work done. Security has to be designed around that reality.

Final thought

Data breaches rarely start with AI. But AI can make them faster.

- Faster to find.

- Faster to summarize.

- Faster to combine.

- Faster to copy.

- Faster to move.

- Faster to misunderstand.

- Faster to expose.

That does not mean organizations should avoid AI. It means they need to stop treating AI as something separate from data governance.

Because Copilot and GenAI do not operate in a vacuum. They sit on top of your data estate.

- Your permissions.

- Your labels.

- Your sharing model.

- Your endpoints.

- Your browser activity.

- Your audit capability.

- Your governance maturity.

And if that foundation is messy, AI will not politely ignore the mess.

🕵️‍♂️ It will make the mess searchable.

That is why the data breach story is changing. The breach may still start with a normal user action. But now that action might not be a download, an attachment, or a USB copy. It might simply be a prompt.

And that is exactly why AI governance starts with data governance.
